Automotive companies, including traditional manufacturers (such as Audi), suppliers (e.g., Bosch and ZF), and new entrants (Nvidia plus numerous start-ups), are increasingly pursuing artificial intelligence (AI) as the central feature of their automated driving systems, using it to take over the traditional role of the human driver. Even top experts in the field often disagree about how to define artificial intelligence; understood most broadly, AI includes all possible approaches to simulating the actions of a rational, intelligent agent (such as a person). The general concept of intelligence remains elusive, so artificial intelligence is even harder to pin down.

Depictions of AI in television and movies often shape popular conceptions of AI: think of Lieutenant Commander Data from *Star Trek: The Next Generation*, HAL from *2001: A Space Odyssey*, ADAM from the *Star Wars* canon, and the androids of the *Terminator* films. Such highly capable AIs with quirky, human-like personalities remain confined to science fiction, at least for now. Real-world AIs would not make compelling fictional characters, but they are becoming increasingly useful in service to humans.

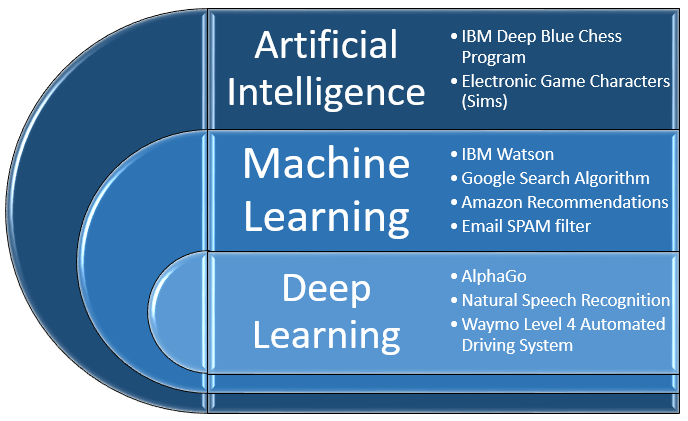

Artificial intelligence emerged as a distinct field of study in the 1950s, shortly after the invention of digital computers. The earliest methods of simulating intelligent decision-making are now commonly known as symbolic AI (or “good old-fashioned AI” to some insiders). Symbolic AI includes any programming method that uses symbols (such as letters and numbers) to describe rationally determined, rule-based operations. Because this description fits most conventional computer programs, many in the field do not recognize symbolic approaches as “real” AI. Nonetheless, such programs are often popularly granted the status of “artificially intelligent” if they perform a task previously thought to require human intelligence. For example, in 1997, IBM’s Deep Blue chess-playing computer (usually described as an AI) defeated reigning world champion Garry Kasparov in a six-game match using exclusively symbolic, rule-based programming (see Figure 1 for examples of different types of deployed AI systems).

Figure 1: AI, Machine Learning, and Deep Learning Implementations

Symbolic AI approaches have the benefit of producing results that humans can readily read, predict, and validate; however, such rule-based programming is largely limited to applications with well-defined parameters (such as the rules of chess). When it comes to complex functions for which input data can be highly variable, parameters are loosely defined, and ideal solutions are unclear, symbolic, rule-based approaches are rarely sufficient. In these situations, programmers often employ a branch of AI known as machine learning.
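As a minimal sketch of what “symbolic, rule-based” means in practice, the Python function below encodes one rule of chess explicitly, as conditions a person wrote by hand. The task and the function name `is_legal_knight_move` are hypothetical illustrations chosen for this article; this is not how Deep Blue was actually written.

```python
def is_legal_knight_move(start: str, end: str) -> bool:
    """Return True if a knight can move from `start` to `end` on an empty board.

    Squares use algebraic notation, e.g. "g1" -> "f3".
    """
    files = "abcdefgh"
    ranks = "12345678"
    # Rule 1: both squares must exist on the 8x8 board.
    for square in (start, end):
        if len(square) != 2 or square[0] not in files or square[1] not in ranks:
            return False
    # Convert the squares to column/row distances.
    dx = abs(files.index(start[0]) - files.index(end[0]))
    dy = abs(ranks.index(start[1]) - ranks.index(end[1]))
    # Rule 2: a knight moves two squares one way and one square the other.
    return (dx, dy) in {(1, 2), (2, 1)}


print(is_legal_knight_move("g1", "f3"))  # True
print(is_legal_knight_move("g1", "g3"))  # False
```

Every answer can be traced back to a rule a person wrote, which is what makes such code easy to read and validate. The approach stalls when no one can write the rules down, as with recognizing a cat in a photograph.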

With machine-learning techniques, programmers establish the general relationships between potential inputs and outputs with respect to the program’s end goal, which is expressed as a measure of success (known as a “fitness function”). The operational details of the algorithm are initially left unresolved. Instead of being given specific operational rules, the AI is programmed to learn the relationships between inputs and outputs when presented with a set of training data. The training data includes previously validated inputs, outputs, and operational variables as necessary to allow the algorithm to establish functional relationships (at least within a degree of certainty). A basic machine-learning process is often similar to regression analysis in statistics, but it may use more advanced analytical methods or handle large, interrelated datasets that would be difficult or impossible for a human to comprehend and manipulate with traditional regression approaches.
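The regression analogy can be sketched in a few lines of Python. Here the programmer fixes only the general form of the relationship (a straight line, y ≈ w·x + b) and a fitness function to be minimized (mean squared error); the parameters `w` and `b` start out unresolved and are learned from training data by gradient descent. The function name `fit_line`, the data, and the learning settings are made-up illustrative assumptions, not drawn from any real automotive system.

```python
def fit_line(xs, ys, learning_rate=0.01, steps=5000):
    """Learn w, b so that w*x + b approximates y, by minimizing mean squared error."""
    w, b = 0.0, 0.0  # operational details start unresolved
    n = len(xs)
    for _ in range(steps):
        # Prediction errors on the training data with the current parameters.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Nudge the parameters "downhill" to improve the fitness function.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical, previously validated training data generated from y = 3x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 4.0, 7.0, 10.0, 13.0]
w, b = fit_line(xs, ys)
print(round(w, 2), round(b, 2))  # 3.0 1.0
```

Nowhere does the code state that the slope is 3; the value emerges from the examples. Real machine-learning systems follow the same pattern with far more parameters and far messier data.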

Software developed using basic machine-learning techniques is now commonplace; however, such AI is limited to applications with structured data and known critical parameters. For example, one familiar machine-learning application is the Google search algorithm, which is continually evolving based on previous searches and links clicked on by users.

While machine learning has revolutionized software in cyberspace, AI has had minimal success in passing as an intelligent agent in the three-dimensional space inhabited by biological beings. That world is shaped not just by physical constraints but also by social, psychological, sociological, regulatory, and other complex factors not readily perceived by machine vision. In the real world, tasks easily performed by even a two-year-old child (such as identifying a cat) are surprisingly difficult for AI. To the extent that software developers have succeeded with highly advanced applications such as cat identification, they have done so mostly through a sophisticated type of machine learning known as “deep learning.”

Deep learning, along with its operational equivalent “cognitive computing,” describes a variety of programming and processing methods predicated on an abstracted model of the human brain and nervous system. Scientists and medical researchers still understand very little about how the human brain operates, but we have determined that its workings have something to do with the way electrical signals travel through parallel networks of specialized cells called neurons. Since the emergence of the AI field, researchers have used human intelligence as a standard for comparison, and some have focused on designing AI hardware and software inspired by the current understanding of the human brain.

In overly simple terms, the theory of using artificial neural networks for AI goes something like this:

Even though we do not know how the human brain works, we know that, somehow, it does work. Thus, if we build an electronic brain that sort of looks like an organic brain, perhaps it will operate like a human brain and learn complex things, even if we still do not know how.

To some extent, it works.

In certain situations, machine-learning algorithms modeled on biological neural networks have demonstrated the ability to efficiently optimize a given fitness function, even when provided minimal information about the relationships between input and output data. To be clear, AI researchers have not yet created anything nearly as capable as a human brain; however, AIs have achieved functionality that, until recently, most experts thought was decades away, if possible at all. Today’s AI software can recognize cats, identify individual faces, understand spoken words, translate between languages, and complete many other useful tasks, and these capabilities have all been made possible through deep learning.
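To make the neural-network idea concrete, the sketch below trains a tiny artificial neural network (two inputs, one hidden layer of simulated neurons, one output) to reproduce the XOR function from just four labeled examples. The programmer supplies only the network’s shape, the examples, and a fitness function to minimize (squared error); the connection weights are found by backpropagation. This is a toy stand-in for the far larger networks used in deep learning, and everything here, including the function name `train_xor`, the layer size, learning rate, and epoch count, is an illustrative assumption.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_xor(hidden=4, learning_rate=0.5, epochs=10000, seed=0):
    """Train a 2-input, one-hidden-layer network on XOR by backpropagation.

    Returns (predict, initial_loss, final_loss).
    """
    rng = random.Random(seed)
    data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
    # Randomly initialized connection weights and biases.
    w1 = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(hidden)]
    b1 = [rng.uniform(-1, 1) for _ in range(hidden)]
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = rng.uniform(-1, 1)

    def forward(x):
        # Each hidden neuron "fires" according to its weighted inputs.
        h = [sigmoid(w1[j][0] * x[0] + w1[j][1] * x[1] + b1[j]) for j in range(hidden)]
        y = sigmoid(sum(w2[j] * h[j] for j in range(hidden)) + b2)
        return h, y

    def loss():
        return sum((forward(x)[1] - t) ** 2 for x, t in data) / len(data)

    initial = loss()
    for _ in range(epochs):
        for x, t in data:  # update after each example (online gradient descent)
            h, y = forward(x)
            # Error signal at the output (constant factor folded into the learning rate).
            d_out = (y - t) * y * (1 - y)
            # Propagate the error back to each hidden neuron.
            d_hid = [d_out * w2[j] * h[j] * (1 - h[j]) for j in range(hidden)]
            for j in range(hidden):
                w2[j] -= learning_rate * d_out * h[j]
                w1[j][0] -= learning_rate * d_hid[j] * x[0]
                w1[j][1] -= learning_rate * d_hid[j] * x[1]
                b1[j] -= learning_rate * d_hid[j]
            b2 -= learning_rate * d_out
    return (lambda x: forward(x)[1]), initial, loss()


predict, before, after = train_xor()
for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, round(predict(x), 2))
print(f"loss: {before:.3f} -> {after:.3f}")
```

XOR is a classic toy problem because no single straight-line rule separates its outputs, yet the network finds workable weights from four examples. The learned knowledge lives in a list of numbers rather than in readable rules, and a network this small can occasionally stall with an unlucky initialization, which hints at why such software is harder to inspect and validate than symbolic code.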

Deep learning methods are already being used, in a limited capacity, to develop software for today’s vehicles (e.g., pedestrian detection systems). The automated driving systems underpinning automated vehicles will likely require extensive use of deep learning and other types of artificial intelligence. If automated vehicles become widely adopted, this portends a fundamental change in how software for automotive systems is developed and validated. Current industry validation frameworks (such as the ISO 26262 functional safety standard) are not directly applicable to software rooted in deep learning, because such software is inherently opaque: its behavior is learned from data rather than specified by traceable, human-written requirements, so it is hard to anticipate in every situation. Alternative validation methods are possible, but the complex relationships among industry, regulators, and consumers make it difficult to predict how any new processes will be received and implemented.

Whatever the future holds, it is sure to be interesting, and AI-driven vehicles will be operating on roads near you—interacting with vehicles driven by humans and other AI systems—very soon.

Learn more and gain greater insight into Artificial Intelligence Applications to Driver Assistance and Vehicle Automation at CAR’s next Industry Briefing.